Reality is now the most hackable system on earth – and the people defending it are losing.

Picture this: a video appears showing a world leader announcing nuclear strikes. The audio is clean. The lighting matches the actual press room. The little mannerisms – the head tilt, the way he pauses before a hard word – are spot-on. You watch it three times and feel nothing but dread.

It never happened. The whole thing was generated by a machine. And here’s what keeps researchers up at night: most people who see that video will never find out.

“For thousands of years, humans operated on a single foundational assumption: if you saw it with your own eyes, it probably happened. That assumption is gone.”

We are living through a break in the history of human perception – quiet, technically complex, and genuinely alarming. The deepfake isn’t a novelty anymore. It’s a weapon. And the race between people who build synthetic realities and people who try to catch them has become one of the stranger conflicts of our time: a war fought in pixels, fought without borders, and fought without a clear way to win.

The generator always wins first

The basic math of this fight is lopsided in a way that should bother you. To cause damage, an attacker needs to produce one convincing fake. To prevent damage, a defender needs to catch every single one. There’s no defence that’s both accurate and scalable – and the attackers know it.
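To put rough numbers on that asymmetry, here is a minimal sketch. It assumes each fake is caught independently with the same probability, which is a simplification, and the function name and the 99% catch rate are illustrative rather than measured.

```python
def chance_any_fake_gets_through(catch_rate: float, attempts: int) -> float:
    """Probability that at least one of `attempts` fakes evades detection.

    Assumes every fake is caught independently with probability `catch_rate`.
    """
    return 1.0 - catch_rate ** attempts


# Hypothetical detector that catches 99% of fakes.
for attempts in (1, 10, 100, 1000):
    p = chance_any_fake_gets_through(0.99, attempts)
    print(f"{attempts:>5} fakes -> {p:.2%} chance at least one slips through")
```

Under those assumptions, a detector that looks excellent on paper still lets at least one fake through about 63% of the time once an attacker has made a hundred attempts, and almost certainly after a thousand.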

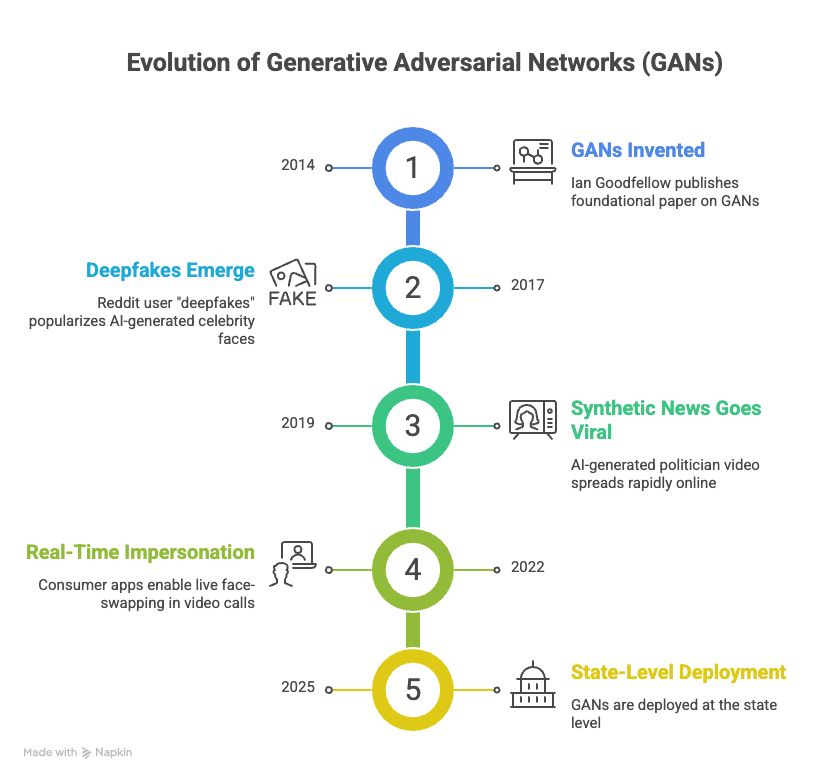

At the heart of many deepfake tools is something called a Generative Adversarial Network (GAN). Two neural networks, locked in a loop: one, the generator, invents fake content; the other, the discriminator, tries to flag it. They train against each other until the generator produces something the discriminator can’t distinguish from the real thing. Then the generator gets better. Then the detector catches up, briefly. Then the generator improves again.

This isn’t a bug – it’s the design. The architecture of the technology guarantees escalation. There’s no stable endpoint where detection wins and stays ahead.
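The adversarial loop can be shown in miniature. What follows is a deliberately tiny sketch, not a deepfake model: the "real data" is just numbers drawn around 2.0, the generator has a single parameter (`shift`), and the discriminator is a one-feature logistic classifier. Every name and number here is an assumption chosen for illustration.

```python
import math
import random

REAL_MEAN = 2.0  # assumed "real" data: samples from a normal distribution around 2.0


def sigmoid(x: float) -> float:
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 3000, batch: int = 64, lr: float = 0.05, seed: int = 0):
    rng = random.Random(seed)
    shift = 0.0      # generator: fake sample = noise + shift
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c), its "probability real"
    for _ in range(steps):
        real = [rng.gauss(REAL_MEAN, 1.0) for _ in range(batch)]
        fake = [rng.gauss(0.0, 1.0) + shift for _ in range(batch)]

        # Discriminator step: push D(real) up and D(fake) down.
        grad_w = grad_c = 0.0
        for x in real:
            err = 1.0 - sigmoid(w * x + c)
            grad_w += err * x
            grad_c += err
        for x in fake:
            err = -sigmoid(w * x + c)
            grad_w += err * x
            grad_c += err
        w += lr * grad_w / batch
        c += lr * grad_c / batch

        # Generator step: move the fakes toward whatever D currently scores as "real".
        grad_shift = sum((1.0 - sigmoid(w * x + c)) * w for x in fake) / batch
        shift += lr * grad_shift
    return shift, w, c


if __name__ == "__main__":
    shift, w, c = train_toy_gan()
    print(f"generator shift: {shift:.2f} (real mean is {REAL_MEAN})")
    print(f"discriminator weight: {w:.3f}")
```

In this toy the generator's output drifts toward the real data until the discriminator has little left to separate them on. Note that the discriminator here lives inside the training loop; it is not the same thing as an independent forensic detector, which only sees the finished fake.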

The Liar’s Dividend

There is one underrated consequence of all this. Deepfakes give bad actors a new defence they didn’t have. Caught on camera doing something awful? Call it AI-generated. Even when footage is completely real, the existence of deepfake technology now provides a ready-made alibi. Truth becomes deniable. This is sometimes called the “liar’s dividend” – and it may be more corrosive than the fakes themselves.

How we got here

Who’s fighting back

The defenders aren’t helpless – just outpaced. Across universities, tech companies, intelligence agencies, and civil society organisations, a sprawling counter-effort has taken shape. It’s serious work, done by serious people. But it’s built on sand.

The detection playbook

Physiological tells. Deepfakes still struggle to replicate subtle biological signals – natural blink timing, pulse patterns visible in skin-tone fluctuation, micro-expressions that last a fraction of a second and look slightly wrong when faked.

Content provenance. The C2PA standard attaches cryptographically signed provenance records to media, ideally starting at the point of capture, so that a file’s origin and edit history can be verified downstream. Think of it as a chain of custody for digital content.

Compression artifacts. AI-generated video leaves statistical fingerprints in how pixels are compressed – invisible to the eye, detectable by algorithms trained to look.

Behavioural biometrics. Systems that model how specific individuals speak, gesture, and move can flag departures from baseline – even when the video and audio look perfect.
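Of the four approaches, content provenance is the easiest to show in a few lines. The sketch below illustrates only the general sign-then-verify idea. It is not the C2PA format: real provenance systems use certificate-based public-key signatures, whereas this example uses a shared-secret HMAC from Python's standard library as a stand-in. The key, device ID and function names are all hypothetical.

```python
import hashlib
import hmac
import json

# Stand-in for a device signing key. A real system would use a private key
# tied to a certificate, not a shared secret.
DEVICE_KEY = b"hypothetical-camera-secret"


def sign_capture(media: bytes, device_id: str) -> dict:
    """Build a signed record binding a device ID to a hash of the media."""
    claim = {"device": device_id, "sha256": hashlib.sha256(media).hexdigest()}
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(DEVICE_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_capture(media: bytes, manifest: dict) -> bool:
    """Check the record is untampered and still matches the media bytes."""
    claim = manifest["claim"]
    payload = json.dumps(claim, sort_keys=True).encode()
    expected = hmac.new(DEVICE_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = hashlib.sha256(media).hexdigest() == claim["sha256"]
    return signature_ok and content_ok


original = b"raw frame bytes from the sensor"
manifest = sign_capture(original, "cam-01")
print(verify_capture(original, manifest))            # untouched media
print(verify_capture(original + b"edit", manifest))  # altered media
```

The design point is that provenance proves where authentic media came from. It says nothing about unsigned media, which is why it only helps once signing is widespread.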

The problem with every one of these approaches is the same: the moment you publish how you detect something, you’ve handed the other side a training target. Researchers release a paper describing how they catch a specific GAN artifact. Within weeks, updated models produce content that avoids that artifact entirely. Detection is whack-a-mole against an opponent that reads every paper you write.

“This isn’t a technological arms race. It’s an epistemological one. The question isn’t whether we can detect fakes. It’s whether anyone will believe the truth anymore – even when we can prove it.”

The part that’s actually frightening

The most unsettling finding from deepfake research isn’t technical. It’s psychological, and it’s simple: humans are bad at this. When people are tested against modern deepfakes, detection accuracy hovers barely above chance. Not that far from a coin flip.

And weirdly, knowing that deepfakes exist doesn’t make people more accurate – it makes them more paranoid. People start rejecting real footage as fake. Researchers call this the inverse of the liar’s dividend: uncertainty spreads until nothing feels trustworthy, including the truth.

The victims aren’t gullible. Deepfake fraud has caught experienced journalists, security professionals, and intelligence analysts. In an environment of genuine uncertainty about what’s real, even sophisticated people learn to doubt everything – which is, arguably, the whole point.

Platforms have made pledges. Most have built automated systems to catch the worst cases – intimate imagery, obvious election manipulation. But the numbers don’t work. Billions of pieces of content go up every day. The math of enforcement at that scale has no solution.
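A back-of-the-envelope calculation shows why the numbers don't work. Every figure below is a hypothetical assumption picked for illustration, not platform data: a billion uploads a day, one fake in every ten thousand, and a detector that catches 99% of fakes while wrongly flagging 1% of genuine content.

```python
def moderation_load(daily_items: float, fake_share: float,
                    recall: float, false_positive_rate: float) -> dict:
    """Estimate what an automated detector produces in one day."""
    fakes = daily_items * fake_share
    genuine = daily_items - fakes
    caught = fakes * recall                       # fakes correctly flagged
    missed = fakes - caught                       # fakes that get through
    false_alarms = genuine * false_positive_rate  # real content wrongly flagged
    precision = caught / (caught + false_alarms)  # share of flags that are fakes
    return {
        "missed_fakes": missed,
        "false_alarms": false_alarms,
        "precision": precision,
    }


# All four inputs are illustrative assumptions.
result = moderation_load(1_000_000_000, 0.0001, 0.99, 0.01)
print(f"fakes missed per day:    {result['missed_fakes']:,.0f}")
print(f"genuine items flagged:   {result['false_alarms']:,.0f}")
print(f"share of flags correct:  {result['precision']:.1%}")
```

With those inputs, roughly a thousand fakes still get through each day, about ten million genuine posts are flagged, and fewer than one flag in a hundred points at an actual fake. Tightening either error rate helps, but at this volume even small rates translate into very large absolute numbers.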

The platform paradox

Social media platforms make money from engagement. Deepfakes are extraordinarily engaging – emotionally provocative, designed to spread, built to bypass the part of your brain that slows down and asks questions. The business model that amplifies synthetic reality is the same one that funds the infrastructure supposedly fighting it. That tension hasn’t been resolved. It probably can’t be.

A future without certainty

Here’s the uncomfortable truth: we’re not going to build our way out of this. A better detector will be met with a better generator. The arms race has no endpoint – just escalation. Anyone who tells you otherwise is selling something, or hasn’t thought it through.

What this means, practically, is that society has to change how it establishes trust. For centuries, we built institutions – courts, journalism, government, science – to serve as arbiters of what’s real. Those institutions are now being pressure-tested by a technology that can fabricate evidence indistinguishable from reality, at scale, on demand.

The solutions that might actually work aren’t purely technical. They involve rebuilding the social infrastructure of trust: media literacy taught at scale and early, provenance standards that become as unremarkable as SSL certificates, legal frameworks that treat synthetic fraud seriously, and something harder to legislate – a cultural habit of epistemic humility. The willingness to say, out loud, “I don’t actually know if this is real.”

“The goal of a deepfake isn’t necessarily to make you believe a lie. It’s to make you incapable of believing anything at all.”

Bottom line

“Seeing is believing” was never airtight. Eyewitnesses misremember. Photos can be staged. But it was a workable heuristic – a good-enough shortcut for navigating a world mostly made of real evidence.

That shortcut is broken. Not bending – broken. The deepfake arms race isn’t a story about technology. It’s a story about who gets to define reality, and what happens to societies that can no longer agree on what they’ve seen.

The arms race continues. Somewhere, the next generation model is already in training.